Leveraging VideoRAG for Company Knowledge Transfer

The Knowledge Transfer Challenge

In many companies, the issue isn’t a lack of data but how to manage it and make it accessible to employees. One particularly pressing challenge is the transfer of knowledge from senior employees to younger generations. This is no small task, as it’s not just about transferring what’s documented in manuals or process guides, but also about the implicit knowledge that exists “between the lines”: the insights and experience locked within the minds of long-serving employees.

This challenge has been present across industries for many years, and as technology evolves, so do the solutions. With the rapid advancement of Artificial Intelligence (AI), particularly Generative AI, new possibilities for preserving and sharing this valuable company knowledge are emerging.

The Rise of Generative AI

Generative AI, especially Large Language Models (LLMs) such as OpenAI’s GPT-4o, Anthropic’s Claude 3.5 Sonnet, or Meta’s Llama 3.2, offers new ways to process large amounts of unstructured data and make it accessible. These models enable users to interact with company data via chatbot applications, making knowledge transfer more dynamic and user-friendly.

But the question remains — how do we make the right data accessible to the chatbot in the first place? This is where Retrieval-Augmented Generation (RAG) comes into play.

Retrieval-Augmented Generation (RAG) for Textual Data

RAG has proven to be a reliable solution for handling textual data. The concept is straightforward: all available company data is split into chunks, each chunk is transformed into a numerical embedding, and the embeddings are stored in a vector database. When a user makes a query, the system embeds the query and searches for relevant chunks by comparing the query’s embedding with the stored embeddings.

With this method, there’s no need to fine-tune LLMs. Instead, relevant data is retrieved and appended to the user’s query in the prompt, ensuring that the chatbot’s responses are based on the company’s specific data. This approach works effectively for all types of textual data, including PDFs, webpages, and even image-embedded documents using multi-modal embeddings.
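The retrieve-then-prompt flow described above can be sketched in a few lines. This is a minimal illustration, not a production setup: the `embed` function is a toy bag-of-words stand-in for a real embedding model, the sample chunks are made up, and all function names are assumptions chosen for this example.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: bag-of-words counts (stand-in for a real embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks whose embeddings are most similar to the query."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


def build_prompt(query: str, chunks: list[str]) -> str:
    """Append the retrieved chunks to the user's question."""
    context = "\n".join(f"- {c}" for c in chunks)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


# Made-up example data, for illustration only.
chunks = [
    "The hydraulic press must be depressurized before the filter is replaced.",
    "Vacation requests are submitted through the HR portal.",
    "Replace the press filter every 500 operating hours.",
]
question = "How do I replace the filter on the press?"
top = retrieve(question, chunks, k=2)
prompt = build_prompt(question, top)
```

In a real system the toy `embed` would be replaced by calls to an embedding model and the sorted scan by a vector database query; the structure of the prompt stays the same.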

In this way, company knowledge stored in documents becomes easily accessible to employees, customers, or other stakeholders via AI-powered chatbots.

Extending RAG to Video Data

While RAG works well for text-based knowledge, it doesn’t fully address the challenge of more complex, process-based tasks that are often better demonstrated visually. For tasks like machine maintenance, where it’s difficult to capture everything through written instructions alone, video tutorials provide a practical solution without the need for time-consuming documentation-writing.

Videos offer a rich source of implicit knowledge, capturing processes step-by-step with commentary. However, unlike text, automatically describing a video is far from a straightforward task. Even humans approach this differently, often focusing on varying aspects of the same video based on their perspective, expertise, or goals. This variability highlights the challenge of extracting complete and consistent information from video data.

Breaking Down Video Data

To make knowledge captured in videos accessible to users via a chatbot, we need a structured process that converts videos into text while extracting as much relevant information as possible. Videos consist of three primary components:

- Metadata: The handling of metadata is typically straightforward, as it is often available in structured textual form.

- Audio: Audio can be transcribed into text using speech-to-text (STT) models like OpenAI’s Whisper. For industry-specific contexts, it’s also possible to enhance accuracy by incorporating custom terminology into these models.

- Frames (visuals): The real challenge lies in integrating the frames (visuals) with the audio transcription in a meaningful way. Both components are interdependent — frames often lack context without audio explanations, and vice versa.

Tackling the Challenges of Video Descriptions

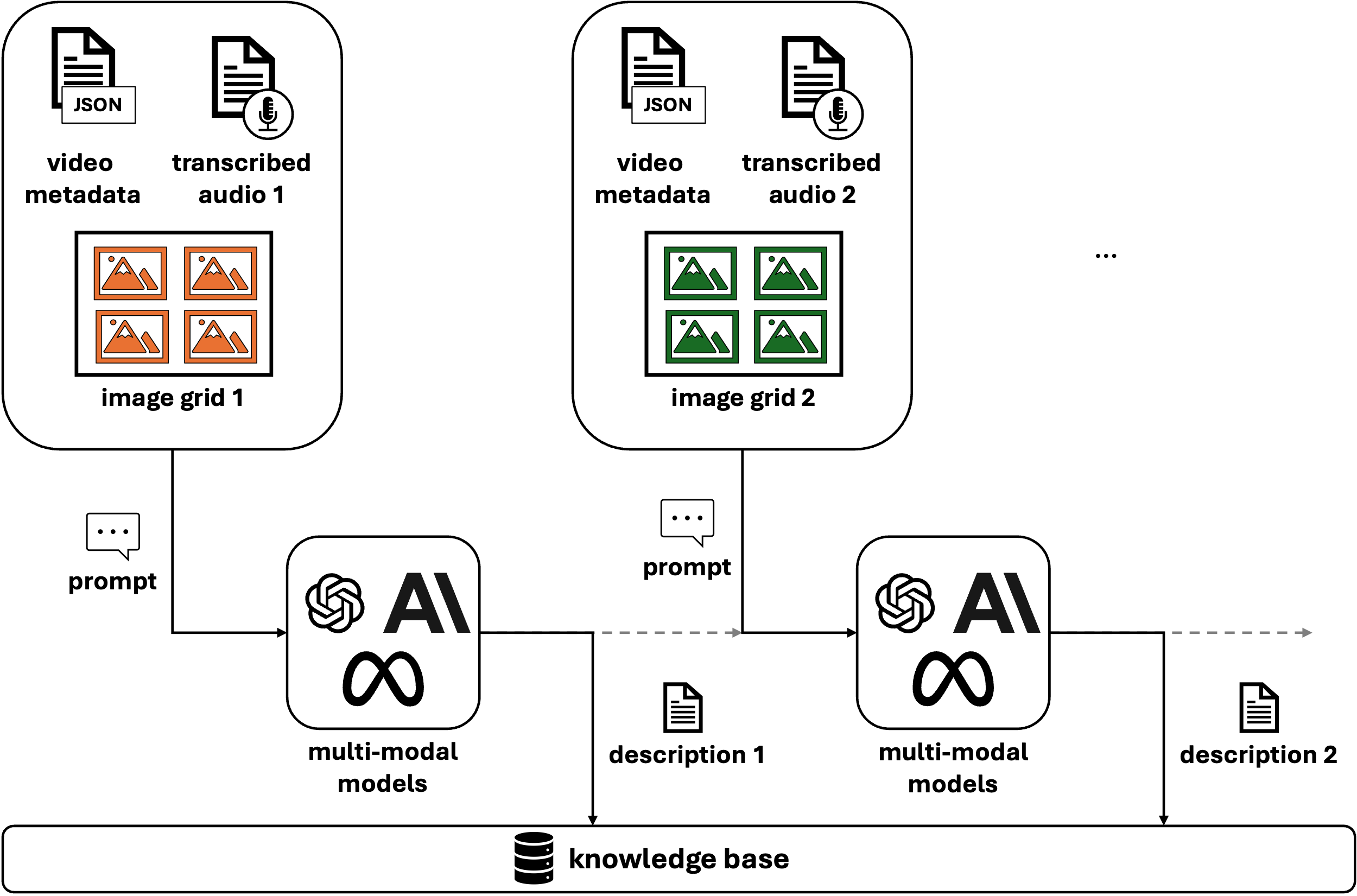

Figure 1: Chunking Process of VideoRAG.

When working with video data, we encounter three primary challenges:

- Describing individual images (frames).

- Maintaining context, as not every frame is independently relevant.

- Integrating the audio transcription for a more complete understanding of the video content.

To address these, multi-modal models like GPT-4o, capable of processing both text and images, can be employed. By using both video frames and transcribed audio as inputs to these models, we can generate a comprehensive description of video segments.

However, maintaining context between individual frames is crucial, and this is where frame grouping (often also referred to as chunking) becomes important. There are two primary methods for grouping frames:

- Fixed Time Intervals: A straightforward approach where consecutive frames are grouped based on predefined time spans. This method is easy to implement and works well for many use cases.

- Semantic Chunking: A more sophisticated approach where frames are grouped based on their visual or contextual similarity, effectively organizing them into scenes. There are various ways to implement semantic chunking, such as using Convolutional Neural Networks (CNNs) to calculate frame similarity or leveraging multi-modal models like GPT-4o for pre-processing. By defining a threshold for similarity, you can group related frames to better capture the essence of each scene.
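Both grouping methods can be sketched as follows. This is a simplified illustration under stated assumptions: frames are represented only by their timestamps or by precomputed feature values, the `similarity` function is supplied by the caller (for example, cosine similarity between CNN features), and the function names and the threshold value are hypothetical.

```python
from typing import Callable, Sequence


def group_fixed(timestamps: Sequence[float], span_s: float) -> list[list[int]]:
    """Group frame indices into consecutive windows of span_s seconds."""
    groups: dict[int, list[int]] = {}
    for i, t in enumerate(timestamps):
        groups.setdefault(int(t // span_s), []).append(i)
    return [groups[key] for key in sorted(groups)]


def group_semantic(
    features: Sequence,
    similarity: Callable[[object, object], float],
    threshold: float,
) -> list[list[int]]:
    """Start a new group whenever a frame's similarity to the previous
    frame drops below the threshold (a simple scene-cut heuristic)."""
    groups: list[list[int]] = []
    for i, feat in enumerate(features):
        if i > 0 and similarity(features[i - 1], feat) >= threshold:
            groups[-1].append(i)
        else:
            groups.append([i])
    return groups


# Frames sampled every 2 seconds, grouped into 5-second windows.
fixed = group_fixed([0, 2, 4, 6, 8, 10], span_s=5)

# Toy 1-D "features" (e.g. mean brightness); similarity = 1 - |a - b|.
brightness = [0.10, 0.12, 0.11, 0.80, 0.82]
scenes = group_semantic(brightness, lambda a, b: 1 - abs(a - b), threshold=0.9)
```

Here `fixed` contains three windows and `scenes` contains two groups, split at the jump in brightness. The threshold is exactly the kind of hyperparameter that needs tuning per use case.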

Once frames are grouped, they can be combined into image grids. This technique allows the model to understand the relation and sequence between different frames, preserving the video’s narrative structure.

The choice between fixed time intervals and semantic chunking depends on the specific requirements of the use case. In our experience, fixed intervals are often sufficient for most scenarios. Although semantic chunking better captures the underlying semantics of the video, it requires tuning several hyperparameters and can be more resource-intensive, as each use case may require a unique configuration.

With the growing capabilities of LLMs and increasing context windows, one might be tempted to pass all frames to the model in a single call. However, this approach should be used cautiously. Passing too much information at once can overwhelm the model, causing it to miss crucial details. Additionally, current LLMs are constrained by their output token limits (e.g., GPT-4o was initially limited to 4,096 output tokens per response), which further emphasizes the need for thoughtful chunking and frame-grouping strategies.

Building Video Descriptions with Multi-Modal Models

Figure 2: Ingestion Pipeline of VideoRAG.

Once the frames are grouped and paired with their corresponding audio transcription, the multi-modal model can be prompted to generate descriptions of these chunks of the video. To maintain continuity, descriptions from earlier parts of the video can be passed to later sections, creating a coherent flow as shown in Figure 2. At the end, you’ll have descriptions for each part of the video that can be stored in a knowledge base alongside timestamps for easy reference.
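The idea of carrying earlier descriptions forward can be sketched as a simple loop. This is an illustration only: `describe` is a hypothetical callable standing in for the multi-modal model call, and the `Chunk` and `SceneDescription` types as well as the `history` parameter are assumptions made for this example.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Chunk:
    start_s: float
    end_s: float
    frames: list      # e.g. image grids for this time span
    transcript: str   # audio transcription for this time span


@dataclass
class SceneDescription:
    start_s: float
    end_s: float
    text: str


def describe_video(
    chunks: list[Chunk],
    describe: Callable[[Chunk, list[str]], str],
    history: int = 2,
) -> list[SceneDescription]:
    """Describe chunks in order, passing the last `history` descriptions as context."""
    results: list[SceneDescription] = []
    for chunk in chunks:
        previous = [r.text for r in results[-history:]] if history > 0 else []
        text = describe(chunk, previous)
        results.append(SceneDescription(chunk.start_s, chunk.end_s, text))
    return results


def fake_describe(chunk: Chunk, previous: list[str]) -> str:
    """Placeholder for the real model call; reports how much context it received."""
    return f"{chunk.transcript} | prior={len(previous)}"


chunks = [Chunk(i * 10, (i + 1) * 10, [], t) for i, t in enumerate("abcd")]
descriptions = describe_video(chunks, fake_describe, history=2)
```

Limiting the history to the most recent descriptions keeps each prompt small while still giving the model enough context to refer back to what happened just before.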

Bringing VideoRAG to Life

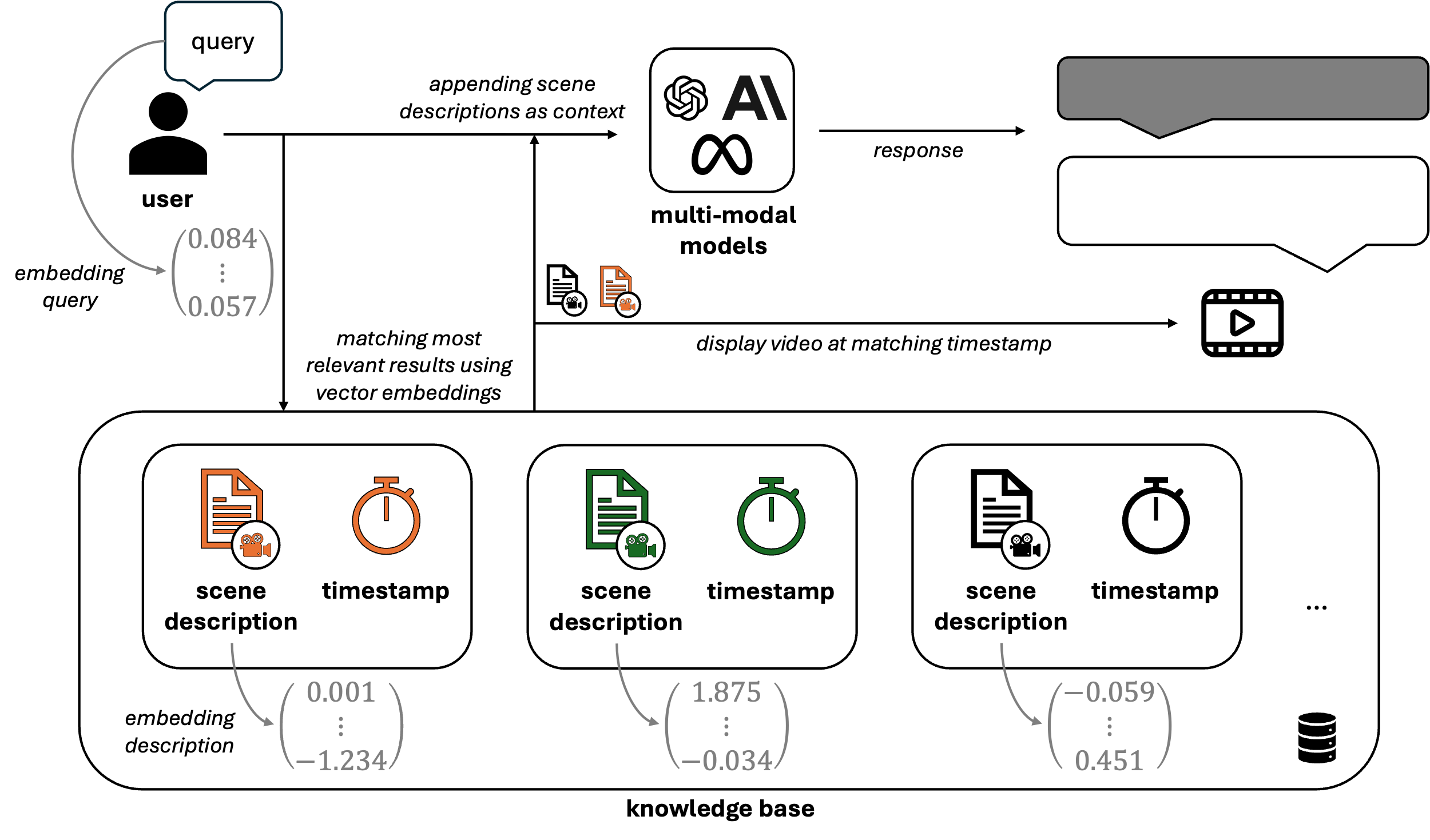

Figure 3: Retrieval process of VideoRAG.

As shown in Figure 3, all scene descriptions from the videos stored in the knowledge base are converted into numerical embeddings. This allows user queries to be similarly embedded, enabling efficient retrieval of relevant video scenes through vector similarity (e.g., cosine similarity). Once the most relevant scenes are identified, their corresponding descriptions are added to the prompt, providing the LLM with context grounded in the actual video content. In addition to the generated response, the system retrieves the associated timestamps and video segments, enabling users to review and validate the information directly from the source material.
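A minimal sketch of this retrieval step is shown below, assuming the scene embeddings have already been computed. The `Scene` type, the function names, the tiny three-dimensional vectors, and the sample descriptions are all made up for illustration; a real system would use an embedding model and a vector database.

```python
import math
from dataclasses import dataclass


@dataclass
class Scene:
    video: str
    start_s: float
    end_s: float
    description: str
    embedding: list[float]


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two dense vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def fmt(seconds: float) -> str:
    """Format seconds as MM:SS."""
    minutes, secs = divmod(int(seconds), 60)
    return f"{minutes:02d}:{secs:02d}"


def search(query_vec: list[float], scenes: list[Scene], k: int = 2) -> list[Scene]:
    """Return the k scenes most similar to the query embedding."""
    ranked = sorted(scenes, key=lambda s: cosine(query_vec, s.embedding), reverse=True)
    return ranked[:k]


def build_context(hits: list[Scene]) -> str:
    """Render retrieved scenes with their source and timestamps for the prompt."""
    return "\n".join(
        f"[{s.video} {fmt(s.start_s)}-{fmt(s.end_s)}] {s.description}" for s in hits
    )


scenes = [
    Scene("maintenance.mp4", 0, 30, "Technician opens the housing.", [1.0, 0.0, 0.0]),
    Scene("maintenance.mp4", 30, 75, "Safety briefing at the whiteboard.", [0.0, 1.0, 0.0]),
    Scene("maintenance.mp4", 75, 120, "Technician removes the filter.", [0.9, 0.1, 0.0]),
]
hits = search([1.0, 0.0, 0.0], scenes, k=2)
context = build_context(hits)
```

Keeping the video name and timestamps attached to every retrieved scene is what lets the application link the answer back to the exact video segment.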

By combining RAG techniques with video processing capabilities, companies can build a comprehensive knowledge base that includes both textual and video data. Employees, especially newer ones, can quickly access critical insights from older colleagues — whether documented or demonstrated on video — making knowledge transfer more efficient.

Lessons Learned

During the development of VideoRAG, we gained several insights that could benefit future projects in this domain. Here are the most important lessons learned:

1. Optimizing Prompts with the CO-STAR Framework

As is the case with most applications involving LLMs, prompt engineering proved to be a critical component of our success. Crafting precise, contextually aware prompts significantly impacts the model’s performance and output quality. We found that the CO-STAR framework, a structure covering Context, Objective, Style, Tone, Audience, and Response, provided a robust guide for prompt design.

By systematically addressing each element of CO-STAR, we ensured consistency in responses, especially in terms of description format. Prompting with this structure enabled us to deliver more reliable and tailored results, minimizing ambiguities in video descriptions.
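One way to keep the six CO-STAR elements explicit is a small template object. The sketch below is an assumption about how such a prompt might be organized, not the prompt used in the project; the class name, the section markers, and the example wording are all hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass
class CoStarPrompt:
    context: str
    objective: str
    style: str
    tone: str
    audience: str
    response: str

    def render(self) -> str:
        """Render the six sections in CO-STAR order, each under its own marker."""
        return "\n\n".join(
            f"# {f.name.upper()} #\n{getattr(self, f.name)}" for f in fields(self)
        )


# Illustrative wording only.
prompt = CoStarPrompt(
    context="You see an image grid of consecutive video frames and the matching transcript.",
    objective="Describe what happens in this part of the video, step by step.",
    style="Technical documentation.",
    tone="Neutral and precise.",
    audience="Technicians who are new to the machine.",
    response="A numbered list of steps, at most 150 words.",
).render()
```

Because every field is required, a missing element fails at construction time rather than silently producing an incomplete prompt.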

2. Implementing Guardrail Checks to Prevent Hallucinations

One of the more challenging aspects of working with LLMs is managing their tendency to generate answers, even when no relevant information exists in the knowledge base. When a query falls outside of the available data, LLMs may resort to hallucinating or using their implicit knowledge—often resulting in inaccurate or incomplete responses.

To mitigate this risk, we introduced an additional verification step. Before answering a user query, we let the model evaluate the relevance of each retrieved chunk from the knowledge base. If none of the retrieved data can reasonably answer the query, the model is instructed not to proceed. This strategy acts as a guardrail, preventing unsupported or factually incorrect answers and ensuring that only relevant, grounded information is used. This method is particularly effective for maintaining the integrity of responses when the knowledge base lacks information on certain topics.

3. Handling Industry-Specific Terminology during Transcription

Another critical observation was the difficulty STT models had when dealing with industry-specific terms. These terms, which often include company names, technical jargon, machine specifications, and codes, are essential for accurate retrieval and transcription. Unfortunately, they are frequently misunderstood or transcribed incorrectly, which can lead to ineffective searches or responses.

To address this issue, we created a curated collection of industry-specific terms relevant to our use case. By passing these terms to the STT model as part of its prompt, we were able to significantly improve the transcription quality and, in turn, the accuracy of responses. For instance, OpenAI’s Whisper model accepts an optional text prompt that can carry domain-specific terminology, allowing us to guide the transcription process more effectively and ensure that key technical details were preserved.
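A sketch of how a term list might be turned into a transcription prompt is shown below. The helper `build_glossary_prompt`, the "Glossary:" prefix, the character budget, and the sample terms are assumptions for illustration; the `transcribe` function uses the `prompt` parameter of the OpenAI Python SDK's transcription endpoint and requires the `openai` package and an API key, so it is defined here but not called.

```python
def build_glossary_prompt(terms: list[str], max_chars: int = 600) -> str:
    """Join unique terms into a short prompt, stopping at a rough character budget.

    The budget is a crude proxy for the model's limited prompt length.
    """
    prefix = "Glossary: "
    seen: set[str] = set()
    kept: list[str] = []
    length = len(prefix)
    for term in terms:
        term = term.strip()
        if not term or term.lower() in seen:
            continue  # skip blanks and case-insensitive duplicates
        added = len(term) + (2 if kept else 0)  # account for the ", " separator
        if length + added > max_chars:
            break
        seen.add(term.lower())
        kept.append(term)
        length += added
    return prefix + ", ".join(kept)


def transcribe(path: str, terms: list[str]) -> str:
    """Transcribe an audio file, biasing the model towards the given terms."""
    from openai import OpenAI  # imported lazily; needs OPENAI_API_KEY to be set

    client = OpenAI()
    with open(path, "rb") as audio:
        result = client.audio.transcriptions.create(
            model="whisper-1",
            file=audio,
            prompt=build_glossary_prompt(terms),
        )
    return result.text


# Made-up terms, for illustration only.
glossary = build_glossary_prompt(["ACME X200", "torque wrench", "acme x200", " "])
```

The prompt only nudges the model towards the listed spellings, so it is still worth spot-checking transcripts for the terms that matter most.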

Conclusion

VideoRAG represents the next step in leveraging generative AI for knowledge transfer, particularly in industries where hands-on tasks require more than just text to explain. By combining multi-modal models and RAG techniques, companies can preserve and share both explicit and implicit knowledge effectively across generations of employees.